A branch of artificial intelligence and computer science, machine learning uses algorithms and data to copy how humans learn, in order to improve its accuracy. It’s a vital component of data science, as algorithms are trained through statistical methods in order to make predictions or classifications, helping to discover key insights.

These important insights are behind decision-making in businesses, impacting factors such as growth metrics. Machine learning was born from the theory that computers are able to learn without having to be programmed to undertake specific tasks and from pattern recognition. The iterative aspect is essential in machine learning, as it allows models to independently adapt as they’re introduced to new data.

In this guide, we’ll cover:

- How does machine learning work?

- A brief history of machine learning

- Machine learning vs deep learning vs neural networks

- Machine learning methods

- The applications of machine learning

How does machine learning work?

In order to create good machine learning systems, you need scalability, data preparation capabilities, ensemble modeling, algorithms, and automation and iterative processes. The learning system of a machine learning algorithm can be divided into three separate parts:

1. Decision process

Machine learning algorithms make predictions or classifications based on input data that is either labeled or unlabeled, which helps the algorithm estimate about patterns in the data.

2. Error function

These help to evaluate model predictions; with known examples, an error function is capable of making comparisons and accessing the model’s accuracy.

3. Model optimization process

Should the model need to fit better to data points in the training set, weights can be adjusted in order to reduce the discrepancy between both known examples and model estimates. Machine learning algorithms repeat the evaluation and optimization processes and autonomously update weights until they meet a set accuracy threshold.

Models can now analyze larger and more complex amounts of data, delivering quicker and more accurate results. Through building precise models, businesses can better identify opportunities and avoid risks.

A brief history of machine learning

1842: Ada Lovelace, the world’s first computer programmer, creates an algorithm - she describes a sequence of operations to help solve mathematical problems.

1936: Alan Turing theorizes how machines might decode and execute a set of instructions, with his published work becoming what is considered to be the basis for computer science.

1943: A neural network is modeled through electrical circuits, which becomes the base for computer scientists to apply in the 1950s.

1950: Alan Turing creates the ‘Turing Test’, which determines whether a computer has real intelligence. In order to pass this test, computers have to be able to fool humans into believing they’re also human.

1952: The first computer learning program is built by Arthur Samuel. Alongside the IBM computer, it improves the game of checkers the more times it’s played.

1957: The first neural network for computers, the perceptron, is designed by Frank Rosenblatt. It lets computers simulate how humans think.

1967: Computers start being able to do very basic pattern recognition as the ‘nearest neighbor’ algorithm is written. The algorithm could map routes for traveling salesmen, making sure they could visit random cities in a short time.

Learn more about how the machine learning landscape is shifting with this article based on a talk by Ajay Nair from Google.

1981: The concept of explanation-based learning (EBL) is introduced by Gerald Dejong. It lets computers analyze training data in order to create general rules to follow, through discarding unimportant information.

1999: Developed by the University of Chicago, the CAD Prototype Intelligent Workstation reviews 22,000 mammograms and detects cancer with 52% more accuracy than radiologists.

2006: Geoffrey Hinton, computer scientist, rebrands net research as ‘deep learning’.

2016: North Face becomes the first retailer to use IBM Watson’s natural language processing on a mobile app. It uses conversation like human employees as a personal shopper to help users find what they want.

Machine learning vs deep learning vs neural networks

As subfields of artificial intelligence, machine learning, deep learning, and neural networks are often used interchangeably, however, they have notable changes. Deep learning is a subfield of machine learning, and neural networks is a subfield of deep learning.

Machine learning vs deep learning

The difference between machine learning and deep learning is how each algorithm learns. With deep learning, the feature extraction bit of the process is automated, which gets rid of a part of needed human interaction and allows for larger data sets to be used. Deep machine learning is able to leverage labeled data sets, or supervised learning, to provide information to its algorithm. It doesn’t need any human intervention when processing data, which allows for different scalability of machine learning.

Non-deep machine learning depends more on human intervention, as it needs humans to determine a set of features to comprehend differences between various data inputs. It usually needs more structured data so that it can learn.

Machine learning and neural networks

Neural networks, also known as artificial neural networks (ANNs), are made of node layers, which have an input layer and an output layer. Each of the nodes, or artificial neurons, connect to other nodes, having an associated threshold and weight. The node is activated if a node output goes over a specified threshold value, and it sends information to the next layer of the network.

The ‘deep’ part in deep learning refers to the neural network layer depth. A neural network that has more than three layers can be seen as a deep neural network or a deep learning algorithm. If it has just two or three layers, then it’s just a basic neural network.

Feedforward neural networks can easily classify items - but how? Read more below:

Machine learning methods

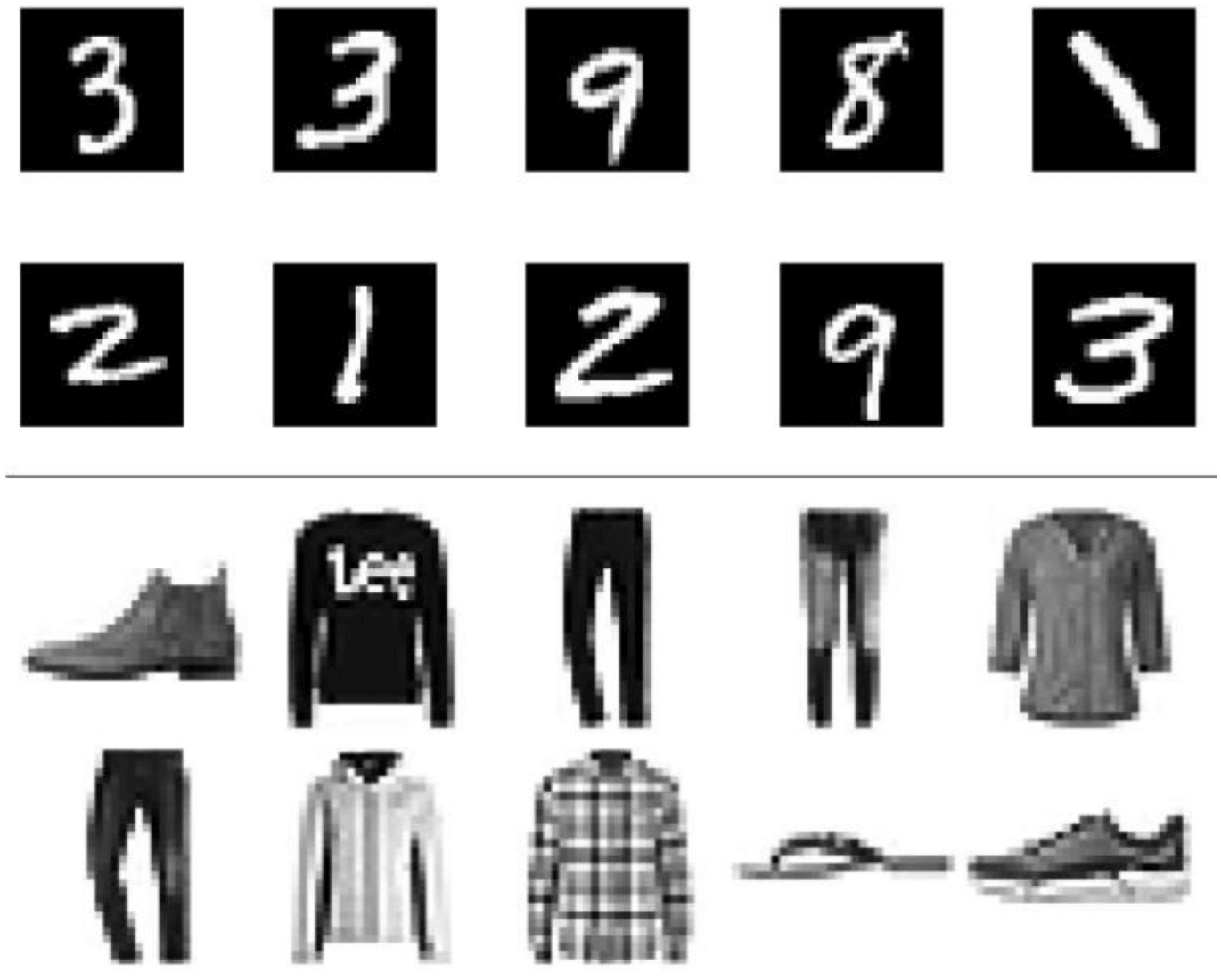

Supervised machine learning

Supervised machine learning is the use of labeled data sets that train algorithms to either classify data or predict outcomes accurately. The model is fed input data, and it continuously adjusts its weights until it’s appropriately fitted. This validation process step makes sure that the model won’t overfit or underfit.

Businesses can solve multiple real-world problems at scale, including the classification of spam email in its own folder. Supervised learning uses methods such as support vector machine, linear regression, neural networks, and more.

Unsupervised machine learning

It utilizes machine learning algorithms to find data grouping or hidden patterns. Because it finds both similarities and differences in information, it becomes a great way of cross-selling strategies, conducting exploratory data analysis, image and pattern recognition, and customer segmentation.

Unsupervised learning also reduces the number of features within a model by using dimensionality reduction - two common approaches in this are singular value decomposition (SVD) and principal component analysis (PCA). Unsupervised learning also uses neural networks, probabilistic clustering methods, k-means clustering, and more.

Semi-supervised machine learning

Offering a solution between supervised and unsupervised learning, semi-supervised learning uses a smaller data set when guiding classification and feature extraction from bigger, unlabeled data sets. It can also help solve the issue of insufficient labeled data to train supervised learning algorithms.

Reinforcement machine learning

This method interacts with the environment by producing actions and finding errors or rewards, with trial and error search and delayed reward being the more important components. Reinforcement machine learning lets machines and software agents automatically discover the ideal behavior within specific contexts so that performance can be optimized. A reinforcement signal is needed for the agent to know which action is the best one, which is done through simple reward feedback.

What are the main applications of machine learning?

Recommendation engines

By making use of past consumer behavior data, algorithms can find data trends to create cross-selling strategies that are more efficient. This is found through add-on suggestions in online retailers, like Amazon.

Speech recognition

Speech recognition, or speech-to-text, uses natural language processing (NLP) to process spoken human speech into text. This can be found in Apple devices with Siri, for example, which uses voice search.

Computer vision

Using convolutional neural networks, computer vision determines valuable information from visual inputs like videos and images so computers and systems can take appropriate actions. This can be found in social media through photo tagging and radiology imaging in healthcare.

Government

In public safety, for example, machine learning helps to mine multiple data sources for valuable insights. Sensor data can identify how to improve efficiency and save on costs while helping to detect fraud and minimize identity theft.

Customer service

Continuously replacing human employees, online chatbots answer frequently asked questions (FAQs) to offer a personalized service or cross-sell products. Virtual assistants and voice assistants are taking over customer service and freeing up human resources.

Transportation

In the transportation industry, it’s important to both analyze and identify trends and patterns, as it relies on the continuous efficiency of routes and on the prediction of potential issues that can affect profitability.

Financial services

Machine learning in the financial industry is helping to prevent fraud and identify important data insights. These insights can lead to investment or trading opportunities. Data mining can also highlight fraud signs or help to identify which clients have high-risk profiles.

Healthcare

Sensors and wearable devices assess patient health in real-time, alongside helping medical experts identify trends by analyzing data and identifying trends that can help improve treatment and diagnoses.

For more resources like this, AIGENTS have created 'Roadmaps' - clickable charts showing you the subjects you should study and the technologies that you would want to adopt to become a Data Scientist or Machine Learning Engineer.

Follow us on LinkedIn

Follow us on LinkedIn