Organizations of all sizes are racing to deploy generative AI to improve efficiency and cut costs. As with any new technology, deployment comes with barriers that can stall progress and delay implementation timelines.

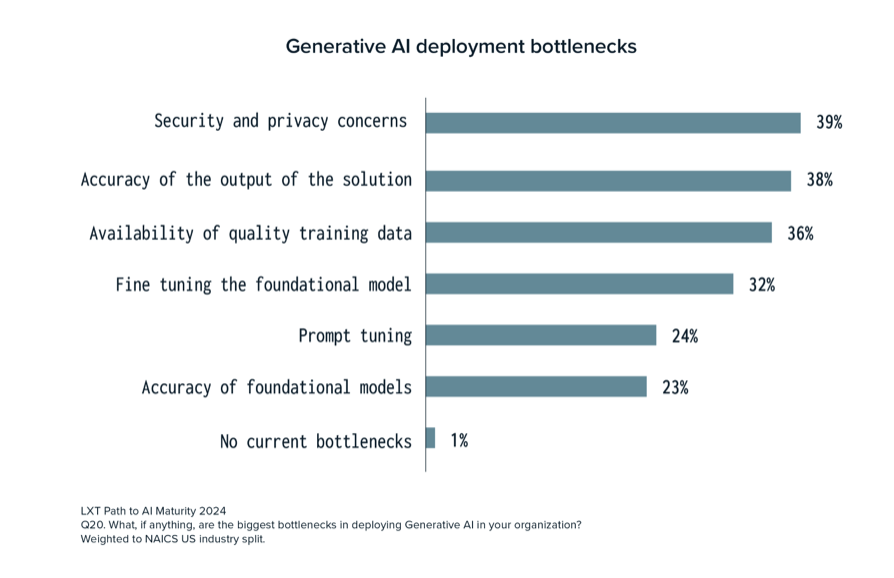

LXT’s latest AI maturity report reflects the views of 315 executives working in AI and reveals the top bottlenecks companies face when deploying generative AI.

These include:

- Security and privacy concerns

- Accuracy of the output of the solution

- Availability of quality training data

- Fine-tuning the foundational model

- Prompt tuning

- Accuracy of foundational models

Only 1% of respondents stated they were not experiencing any bottlenecks with their generative AI deployments.

1. Security and privacy concerns

39% of respondents highlighted security and privacy concerns as their top bottleneck in deploying generative AI. This is not surprising as generative AI models require a large amount of training data. Companies must ensure proper data governance to avoid exposing personal data and sensitive information, such as names, addresses, contact details, and even medical records.

Additional security concerns for generative AI models include adversarial attacks, in which bad actors cause the models to generate inaccurate and even harmful outputs. Finally, care must be taken to ensure that generative AI models do not generate content that mimics intellectual property, which could land a company in legal hot water.

Companies can mitigate these risks by obtaining consent from anyone whose data is being used to train their generative AI models, similar to how consent is obtained when using individuals’ photos on websites. Additional data governance procedures should be implemented as well, including data retention policies and the redaction of personally identifiable information (PII) to maintain individual confidentiality.
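As a rough illustration of the redaction step, here is a minimal Python sketch that masks email addresses and US-style phone numbers before text enters a training set. The patterns, the `PII_PATTERNS` table, and the `redact_pii` function are assumptions made for this example. Production pipelines typically need much broader coverage (names, addresses, medical identifiers) and dedicated tooling.

```python
import re

# Illustrative patterns only (an assumption for this sketch); real PII
# detection has to cover many more formats and categories.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact_pii(text):
    """Replace every match of each pattern with a bracketed placeholder."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


if __name__ == "__main__":
    print(redact_pii("Contact jane.doe@example.com or 555-123-4567."))
```

Placeholders such as `[EMAIL]` keep the sentence structure intact, which can matter when the redacted text is still used for training.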

2. Accuracy of the output of the solution

Neck and neck with security and privacy concerns, 38% of respondents stated that the accuracy of generative AI’s output is a top challenge. We’ve all seen the news articles about chatbots spewing out misinformation and even going rogue.

While generative AI has immense potential to streamline business processes, it needs guardrails to reduce hallucinations, improve accuracy, and maintain customer trust. For example, companies should have full clarity on the source and accuracy of the data being used to train their models and should maintain documentation on these sources.

Further, human-in-the-loop processes for evaluating the accuracy of training data, as well as the output of generative AI systems, are crucial. This can also help reduce data bias so that generative AI models operate as intended.
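One way to picture a human-in-the-loop process is a review queue: outputs the system is less sure about go to a person, and the reviewers’ verdicts feed an accuracy estimate. The sketch below assumes each output carries a confidence score between 0 and 1. That score, the `GeneratedOutput` class, and the 0.8 threshold are all hypothetical choices for illustration and do not come from the report.

```python
from dataclasses import dataclass


@dataclass
class GeneratedOutput:
    prompt: str
    response: str
    confidence: float  # hypothetical score in [0, 1] supplied by the system


def split_for_review(outputs, threshold=0.8):
    """Send outputs below the confidence threshold to human reviewers."""
    auto_approved = [o for o in outputs if o.confidence >= threshold]
    needs_review = [o for o in outputs if o.confidence < threshold]
    return auto_approved, needs_review


def reviewed_accuracy(verdicts):
    """Share of reviewed outputs that humans marked as correct."""
    if not verdicts:
        return None  # nothing has been reviewed yet
    return sum(verdicts) / len(verdicts)
```

In practice, teams often also sample some high-confidence outputs for review so that the accuracy estimate is not based only on the hardest cases.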

3. Availability of quality training data

High-quality training data is essential, as it directly impacts AI models’ reliability, performance, and accuracy. It allows models to make better decisions and create more trustworthy outcomes.

36% of respondents in LXT’s survey stated that the availability of high-quality training data is a challenge with generative AI deployments. Recent press coverage has even highlighted that the supply of human-written text for chatbot training could be used up by 2026. This bottleneck prevents companies from scaling their models efficiently, which in turn affects the quality of their output.

When it comes to deploying any AI solution, the data needed to train the models should be treated as an individual product with its own lifecycle. Companies should be deliberate about planning for the amount and type of data they need to support the lifecycle of their AI solution. A data services partner can provide guidance on data solutions that will create optimal results.

4. Fine-tuning the foundational model

Fine-tuning involves improving open-source, pre-trained foundation models, often through instruction fine-tuning, reinforcement learning from human or AI feedback (RLHF/RLAIF), or domain-specific pre-training.

32% of respondents stated that fine-tuning the foundational model can be a challenge when deploying generative AI, as it requires a deep understanding of large foundation model (LFM) training improvements and transformer models. To fine-tune models, companies must also have employees who can speed up training processes using tools for multi-machine training and multi-GPU setups, for example.

However, despite its potential gains, fine-tuning has several issues. Inaccuracies can increase after training, and a model that is overloaded with large domain-specific corpora can lose some of its ability to generalize.
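A simple way to catch these issues is to score the model on both a domain test set and a general test set, before and after fine-tuning. The following Python sketch assumes predictions and labels are already available as plain lists. The function names and the 0.02 tolerance are hypothetical and chosen only for illustration.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the reference labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def regression_report(base_preds, tuned_preds, labels, max_drop=0.02):
    """Compare accuracy per evaluation set before and after fine-tuning.

    All three arguments are dicts keyed by evaluation-set name. A set is
    flagged when accuracy falls by more than max_drop.
    """
    report = {}
    for name, gold in labels.items():
        before = accuracy(base_preds[name], gold)
        after = accuracy(tuned_preds[name], gold)
        report[name] = {
            "before": before,
            "after": after,
            "regressed": (before - after) > max_drop,
        }
    return report
```

A result where the domain set improves while the general set is flagged as regressed is the pattern described above: the model has gained domain accuracy at the cost of generalization.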

To combat potential issues in fine-tuning models, reinforcement learning and supervised fine-tuning are useful methods, as they help to remove harmful information and bias from responses that LLMs generate.

5. Prompt tuning

Prompt tuning is a technique that adjusts the prompts that inform a pre-trained language model’s response without a complete overhaul of its weights. These prompts are integrated into a model’s input processing. 24% of respondents stated that prompt tuning presents challenges when deploying generative AI.
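To make the idea concrete, here is a toy Python sketch in which a small set of trainable "soft prompt" vectors is placed in front of the input while the model’s own weights stay frozen. The three-number `FROZEN_WEIGHTS` list is a stand-in for a real pre-trained model, and every name and value is an assumption for illustration. Real prompt tuning operates on the embeddings of a large language model, not on a linear toy like this one.

```python
# Stand-in for a pre-trained model: these weights are never updated.
FROZEN_WEIGHTS = [0.5, -0.25, 1.0]


def predict(soft_prompt, token_vectors):
    """Prepend the soft prompt to the input, mean-pool, apply frozen weights."""
    rows = soft_prompt + token_vectors
    pooled = [sum(column) / len(rows) for column in zip(*rows)]
    return sum(w * x for w, x in zip(FROZEN_WEIGHTS, pooled))


def mean_squared_error(soft_prompt, examples):
    """Average squared error over (token_vectors, target) pairs."""
    errors = [(predict(soft_prompt, x) - y) ** 2 for x, y in examples]
    return sum(errors) / len(errors)


def tune_prompt(soft_prompt, examples, lr=0.5, steps=200):
    """Gradient descent on squared error that updates only the soft prompt."""
    prompt = [row[:] for row in soft_prompt]  # leave the caller's list intact
    for _ in range(steps):
        for token_vectors, target in examples:
            error = predict(prompt, token_vectors) - target
            n_rows = len(prompt) + len(token_vectors)
            for row in prompt:
                for j, w in enumerate(FROZEN_WEIGHTS):
                    # d(error**2) / d(row[j]) = 2 * error * w / n_rows
                    row[j] -= lr * 2 * error * w / n_rows
    return prompt


if __name__ == "__main__":
    examples = [
        ([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]], 1.0625),
        ([[0.0, 0.0, 1.0], [1.0, 1.0, 0.0]], 1.3125),
    ]
    start = [[0.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
    tuned = tune_prompt(start, examples)
    print(mean_squared_error(start, examples), mean_squared_error(tuned, examples))
```

The point of the sketch is the division of labor: the error drops because the prompt vectors change, while `FROZEN_WEIGHTS` is left untouched throughout.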

Prompt tuning is just one method that can be used to improve an LLM’s performance on a task. Fine-tuning and prompt engineering are other methods that can be used to improve model performance, and each method varies in terms of resources needed and training required.

Prompt tuning is best suited for maintaining a model’s integrity across tasks. It requires fewer computational resources than fine-tuning and less human involvement than prompt engineering.

Companies deploying generative AI should evaluate their use case to determine the best way to improve their language models.

6. Accuracy of foundational models

23% of respondents in LXT’s survey said that the accuracy of foundational models is a challenge in their generative AI deployments. Foundational models are pre-trained to perform a range of tasks and are used for natural language processing, computer vision, speech processing, and more.

Foundational models give companies immediate access to pre-trained capabilities without having to spend time training their own model and without having to invest as heavily in data science resources.

There are some challenges with these models, however, including lack of accuracy and bias. If the model is not trained on a diverse dataset, it won’t be inclusive of the population at large and could result in AI solutions that alienate certain demographic groups. It’s critical for organizations using these models to understand how they were trained and tune them for better accuracy and inclusiveness.
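A basic first check on dataset diversity is to measure how each group is represented in the data used for tuning. The Python sketch below assumes every record carries a single group label, such as a language or dialect tag. The `underrepresented_groups` function and the 10% default threshold are hypothetical choices for illustration, and a balanced count alone does not guarantee an unbiased model.

```python
from collections import Counter


def underrepresented_groups(group_labels, min_share=0.1):
    """Return each group whose share of the dataset is below min_share."""
    counts = Counter(group_labels)
    total = len(group_labels)
    return {
        group: count / total
        for group, count in counts.items()
        if count / total < min_share
    }
```

For example, calling it on 18 records tagged `"en"` and 2 tagged `"sw"` with `min_share=0.15` flags `"sw"`, since it makes up only 10% of the records.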

Get more insights in the full report

With the rapidly evolving field of AI, keeping up-to-date with the trends and developments is essential for success in AI initiatives. LXT’s Path to AI Maturity report gives you a current picture of the state of AI maturity in the enterprise, the amount of investment made, the top use cases for generative AI, and much more.

Download the report today to access the full research findings.
