A research paper introduces IET, a technique aimed at one of AI's thorniest problems: accountability in multi-agent systems.

As organizations increasingly deploy AI architectures where multiple specialized agents collaborate to produce outputs, determining which agent contributed what becomes nearly impossible when things go wrong.

The accountability crisis in collaborative AI

Picture this scenario: A financial advisory AI system, composed of multiple specialized agents working together, provides investment advice that leads to significant losses. The system includes a market analysis agent, a risk assessment agent, a portfolio optimization agent, and a summary generation agent.

When regulators investigate, they discover the company's execution logs have been deleted. Without these logs, there's no way to determine which agent made the critical error.

The scenario is illustrative, but the underlying problem is real. As multi-agent systems become standard in industries from healthcare to autonomous vehicles, the inability to trace accountability poses serious legal and ethical challenges.

Current systems rely entirely on external logging infrastructure to track agent interactions. But logs can be corrupted, deleted, or simply unavailable due to privacy constraints.

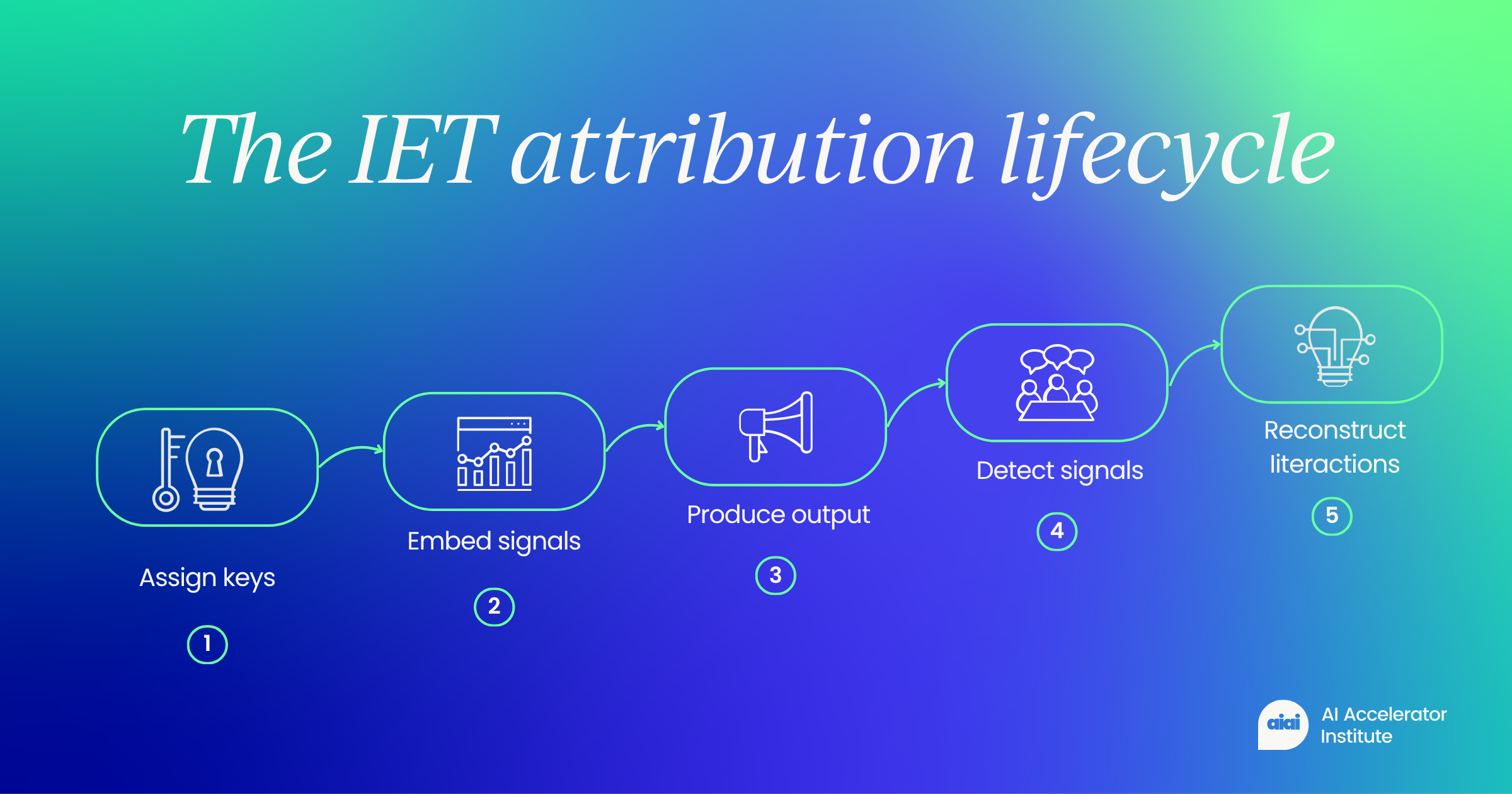

How IET transforms text into a self-documenting audit trail

The core innovation of IET lies in its ability to modify token probability distributions during text generation. Each agent in a multi-agent system receives a unique cryptographic key.

When an agent generates text, the IET framework subtly adjusts the probability of selecting certain tokens in ways that embed the agent's signature.

These modifications are calibrated to be strong enough for statistical detection by an algorithm while remaining imperceptible to human readers. The text reads naturally and maintains its quality, but it now carries hidden metadata about its creation process.
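To make the idea concrete, here is a minimal sketch of keyed probability biasing. It is not the paper's algorithm: it assumes a common "green list" style of watermark in which a keyed hash of the previous token and a candidate token decides whether that candidate gets a small logit boost. The function names, the `gamma` fraction, and the `delta` boost are all hypothetical choices for illustration.

```python
import hashlib
import hmac
import math


def is_green(key: bytes, prev_token: str, token: str, gamma: float = 0.5) -> bool:
    """Keyed pseudo-random test: does `token` fall in the agent's favoured set?

    Assumption: the favoured set depends on the agent key and the previous token.
    Roughly a fraction `gamma` of the vocabulary is favoured in any context.
    """
    message = f"{prev_token}\x00{token}".encode()
    digest = hmac.new(key, message, hashlib.sha256).digest()
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def biased_distribution(
    logits: dict[str, float], key: bytes, prev_token: str, delta: float = 2.0
) -> dict[str, float]:
    """Add `delta` to the logits of favoured tokens, then apply a softmax."""
    shifted = {
        tok: logit + (delta if is_green(key, prev_token, tok) else 0.0)
        for tok, logit in logits.items()
    }
    peak = max(shifted.values())  # subtract the max for numerical stability
    weights = {tok: math.exp(value - peak) for tok, value in shifted.items()}
    total = sum(weights.values())
    return {tok: weight / total for tok, weight in weights.items()}


# Toy next-token logits after the word "will" (made-up numbers).
logits = {"rise": 1.0, "fall": 0.5, "hold": 0.2, "drop": -0.3}
key = b"agent-market-analysis"  # hypothetical per-agent secret
print(biased_distribution(logits, key, "will"))
```

A small `delta` only nudges the sampling distribution, which is why text produced this way can still read naturally; over many tokens, the excess of favoured tokens becomes statistically detectable to someone holding the key.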

Think of it like watermarking a document, but at a much more granular level. Instead of marking an entire document as coming from one source, IET can identify which specific words, sentences, or paragraphs each agent contributed.

More importantly, it can detect the exact moments when control passes from one agent to another.

The detection process employs what the researchers call "transition-aware scoring." An auditor with access to the secret keys can scan the final text and algorithmically identify:

- Which agent generated each segment of text

- The precise handover points between agents

- The complete interaction topology showing how agents delegated tasks and refined each other's work
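The article does not spell out how transition-aware scoring works, so the following is only a sketch of one way the segments and handover points listed above could be recovered. It assumes each token either matches or misses each agent's keyed favoured set, and it uses a Viterbi-style dynamic program with a penalty for switching agents. The names `green_hits` and `segment_by_agent`, and the parameters `p_on` and `switch_penalty`, are hypothetical.

```python
import hashlib
import hmac
import math


def green_hits(tokens: list[str], keys: dict[str, bytes], gamma: float = 0.5) -> dict[str, list[int]]:
    """For every agent key, mark each token 1 if it lands in that agent's favoured set."""
    hits = {agent: [] for agent in keys}
    for prev, tok in zip(["<s>"] + tokens[:-1], tokens):
        for agent, key in keys.items():
            digest = hmac.new(key, f"{prev}\x00{tok}".encode(), hashlib.sha256).digest()
            hits[agent].append(int(int.from_bytes(digest[:8], "big") / 2**64 < gamma))
    return hits


def segment_by_agent(hits, gamma=0.5, p_on=0.8, switch_penalty=3.0):
    """Assign one agent per token, then merge runs into (agent, start, end) segments.

    Assumption: a token written by an agent hits that agent's set with
    probability `p_on`, and hits any other agent's set with probability `gamma`.
    """
    agents = list(hits)
    n = len(hits[agents[0]])
    if n == 0:
        return []
    # Log-likelihood ratio of "this agent wrote the token" versus "no signal".
    llr = {1: math.log(p_on / gamma), 0: math.log((1 - p_on) / (1 - gamma))}
    best = {a: llr[hits[a][0]] for a in agents}
    back = []
    for i in range(1, n):
        new_best, pointers = {}, {}
        for a in agents:
            prev = max(agents, key=lambda b: best[b] - (0.0 if b == a else switch_penalty))
            cost = 0.0 if prev == a else switch_penalty
            new_best[a] = best[prev] - cost + llr[hits[a][i]]
            pointers[a] = prev
        best = new_best
        back.append(pointers)
    # Backtrack from the best final state to recover the per-token path.
    current = max(agents, key=best.get)
    path = [current]
    for pointers in reversed(back):
        current = pointers[current]
        path.append(current)
    path.reverse()
    # Collapse consecutive identical labels into half-open segments.
    segments, start = [], 0
    for i in range(1, n + 1):
        if i == n or path[i] != path[start]:
            segments.append((path[start], start, i))
            start = i
    return segments


# Hand-made hit pattern: agent A dominates tokens 0-5 (one noisy miss), B tokens 6-11.
example = {
    "A": [1, 1, 0, 1, 1, 1, 0, 0, 0, 0, 0, 0],
    "B": [0, 0, 1, 0, 0, 0, 1, 1, 1, 1, 1, 1],
}
print(segment_by_agent(example))  # [('A', 0, 6), ('B', 6, 12)]
```

The switch penalty is the design choice that matters here: it stops a single noisy token (index 2 in the example) from being read as a handover, while a sustained change in the signal still produces a clean boundary.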

Reconstructing the collaboration graph from text alone

One of IET's most impressive capabilities is its ability to reconstruct complex interaction patterns. Modern multi-agent systems rarely follow simple linear workflows. Instead, they involve intricate patterns of delegation, revision, and synthesis.

Traditional logging would require storing detailed records of each interaction. With IET, this entire collaboration graph can be recovered by analyzing the signal transitions within the final text output.
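As a rough illustration of what recovering a graph from signal transitions could look like, the sketch below turns a list of attributed segments into directed handover edges. It assumes the segments have already been detected and are given as hypothetical `(agent, start, end)` tuples; it captures only handovers visible in text order, which may be simpler than the reconstruction the paper performs.

```python
from collections import Counter


def handover_graph(segments):
    """Count directed handovers between consecutive segments written by different agents."""
    edges = Counter()
    for (src, _, _), (dst, _, _) in zip(segments, segments[1:]):
        if src != dst:
            edges[(src, dst)] += 1
    return edges


def tokens_per_agent(segments):
    """Total number of tokens attributed to each agent (segments are half-open ranges)."""
    totals = Counter()
    for agent, start, end in segments:
        totals[agent] += end - start
    return totals


# Hypothetical segmentation of a 120-token report.
segments = [
    ("analyst", 0, 40),
    ("risk", 40, 75),
    ("analyst", 75, 90),
    ("summary", 90, 120),
]
print(dict(handover_graph(segments)))
print(dict(tokens_per_agent(segments)))
```

In this toy case the edge from `risk` back to `analyst` suggests a revision loop, the kind of non-linear pattern described above.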

The researchers demonstrated that their system could accurately recover agent segments and coordination structures while preserving the quality of the generated text.

In their experiments, the embedded signals didn't degrade the fluency or utility of the outputs, addressing a critical concern about whether such attribution systems might compromise performance.

Privacy preservation through cryptographic design

IET incorporates privacy by design through its use of cryptographic keys. The attribution signals embedded in text are only detectable by holders of the corresponding secret keys.

To unauthorized observers, the text appears completely normal, with no indication that it contains hidden attribution data.

This feature addresses a crucial balance in AI deployment. Organizations need accountability mechanisms for safety and compliance, but they also need to protect proprietary information about their AI architectures. IET allows for post-incident forensic analysis without exposing the internal structure of AI systems during normal operation.

The privacy-preserving nature of IET also enables selective disclosure. Different stakeholders can be given different levels of access to attribution information based on their authorization level and need to know.
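One way selective disclosure could be arranged, offered purely as an assumption since the article does not describe key management, is to derive each agent's key from a master secret and hand an auditor only the keys for the agents they are cleared to inspect. The helper names and the `agent-key:` label below are hypothetical.

```python
import hashlib
import hmac


def derive_agent_key(master_secret: bytes, agent_id: str) -> bytes:
    """Derive a per-agent key from a master secret using HMAC-SHA256 as a simple KDF."""
    return hmac.new(master_secret, b"agent-key:" + agent_id.encode(), hashlib.sha256).digest()


def disclose(master_secret: bytes, agent_ids: list[str]) -> dict[str, bytes]:
    """Return only the keys for the agents an auditor is authorised to attribute."""
    return {agent_id: derive_agent_key(master_secret, agent_id) for agent_id in agent_ids}


master = b"example-master-secret"  # placeholder; a real secret would come from a key store
all_agents = ["market-analysis", "risk-assessment", "portfolio-optimization", "summary"]

# A risk auditor receives a single key and can test text only for that agent's signal.
risk_auditor_keys = disclose(master, ["risk-assessment"])
print(sorted(risk_auditor_keys))
```

Because each derived key reveals nothing about the master secret or the other agents' keys, an auditor holding one key can check for that agent's signal without learning how many other agents exist.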

Beyond logging: Making AI systems inherently auditable

The implications of IET extend far beyond solving the immediate problem of lost logs. By making attribution an inherent property of AI-generated content rather than relying on external record-keeping, the technology fundamentally changes how we approach AI accountability.

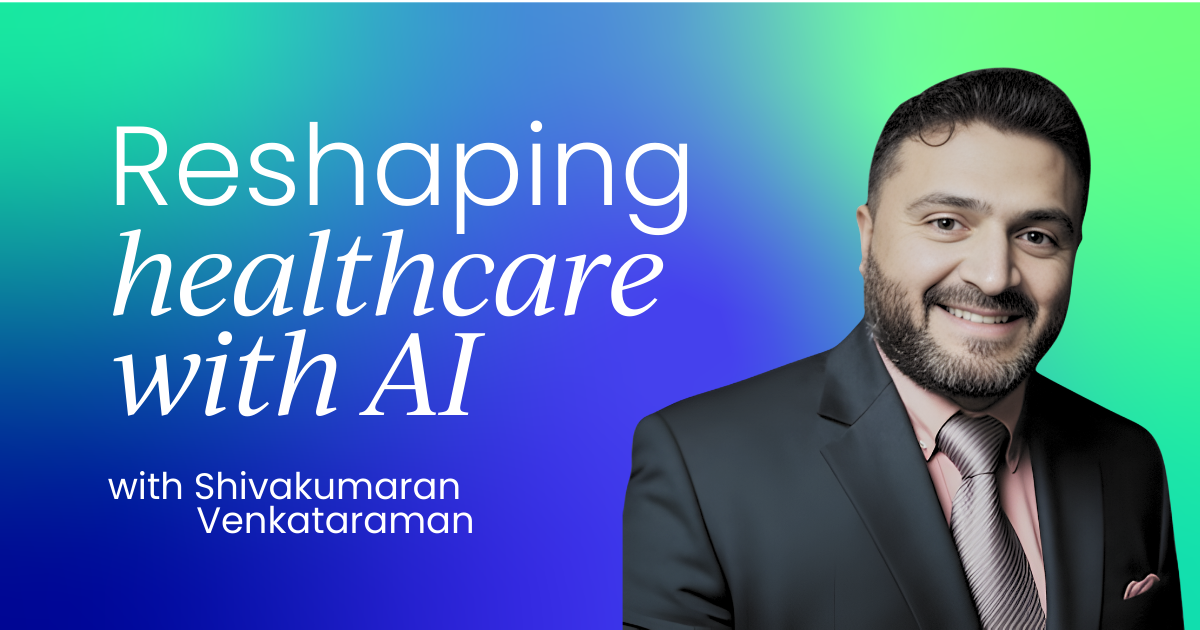

In healthcare, where AI systems increasingly assist with diagnosis and treatment recommendations, IET could enable precise attribution of medical advice to specific AI components.

If a diagnostic error occurs, investigators could determine whether the fault lay with the symptom analysis agent, the medical literature synthesis agent, or the recommendation formulation agent.

The financial sector, where AI systems handle everything from fraud detection to trading decisions, could use IET to meet regulatory requirements for explainability and accountability. Regulators could audit AI decisions after the fact without requiring companies to maintain extensive logging infrastructure.

The future of accountable AI

IET represents a significant advance in AI watermarking technology, moving beyond simple human-versus-AI detection to enable granular attribution within AI systems. As multi-agent architectures become more prevalent, such attribution mechanisms will become essential infrastructure.

The research opens several avenues for future development. The current IET implementation focuses on text, but similar principles could apply to other modalities, such as images or audio generated by collaborative AI systems.

Researchers might also explore how to make attribution signals robust against adversarial attacks while maintaining their subtlety.

Perhaps most importantly, IET demonstrates that accountability doesn't have to be an afterthought in AI system design. By building attribution directly into the generation process, we can create AI systems that are inherently auditable, making them safer and more trustworthy for deployment in critical applications.

As AI systems grow more complex and autonomous, technologies like IET will be crucial for maintaining human oversight and accountability. The ability to trace decisions back to their source, even when traditional audit trails fail, represents a fundamental requirement for the responsible deployment of AI at scale.

