The promise of AI agents that can conduct genuine scientific research has long captivated the machine learning community, and, let’s be honest, slightly haunted it too.

A new system called AIRA2, developed by researchers at Meta's FAIR lab and collaborating institutions, represents a significant leap forward in this quest…

The three walls holding back AI research (and the hidden bottlenecks within them)

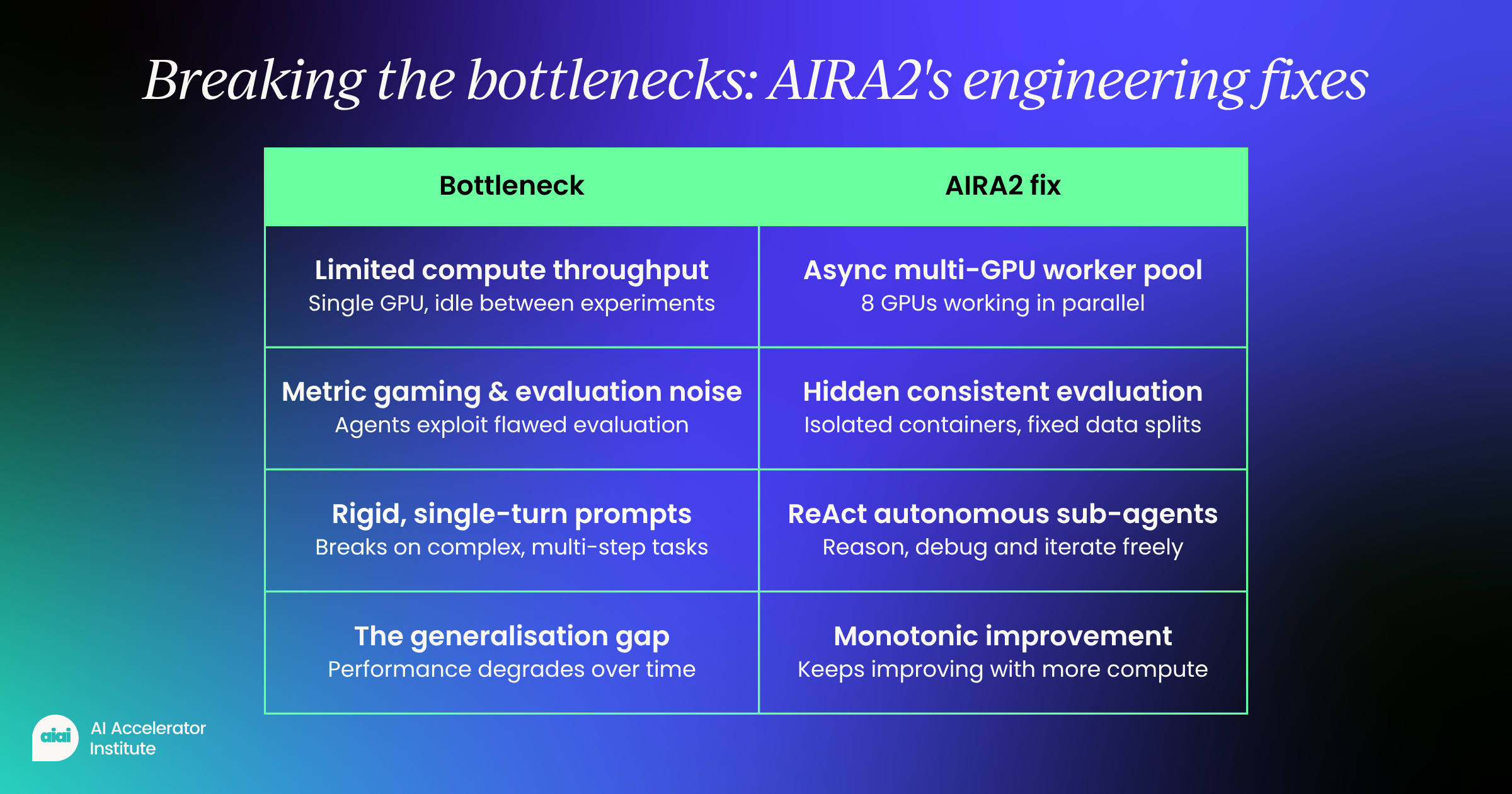

Previous attempts at building AI research agents keep hitting the same ceilings. The team behind AIRA2 identified key bottlenecks that limit progress, no matter how much compute is thrown at the problem.

- Limited compute throughput: Most agents run synchronously on a single GPU, sitting idle while experiments complete. This drastically slows iteration and caps exploration.

- Too few experiments per day: Because of this bottleneck, agents can only test ~10–20 candidates daily, far too few to meaningfully search a massive solution space.

- The generalization gap: Instead of improving over time, agents often get worse, chasing short-term gains that don’t hold up.

- Metric gaming and evaluation noise: Agents exploit flaws in their own evaluation, benefiting from lucky data splits or unnoticed bugs that distort results.

- Rigid, single-turn prompts: Predefined actions like “write code” or “debug” break down in complex scenarios, leaving agents stuck when tasks become multi-step or unpredictable.

Engineering solutions for each bottleneck

AIRA2 addresses each bottleneck through specific architectural innovations.

To solve the compute problem, the system uses an asynchronous multi-GPU worker pool. Think of it as having eight hands instead of one; suddenly, multitasking becomes less of a fantasy.

While one worker trains a model on its dedicated GPU, the orchestrator dispatches new experiments to others, compressing days of sequential work into hours.
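The dispatch pattern described above can be sketched as follows. This is a minimal illustration, not AIRA2's code: it assumes a thread pool stands in for GPU workers, and `run_experiment` and `orchestrate` are hypothetical names, with a random sleep and a random score in place of real training and evaluation. The point it shows is that a freed worker receives the next candidate immediately, rather than waiting for a whole batch to finish.

```python
import concurrent.futures as cf
import random
import time


def run_experiment(candidate_id: int, gpu_id: int) -> tuple[int, int, float]:
    # Hypothetical stand-in for training and scoring one candidate on one GPU.
    time.sleep(random.uniform(0.01, 0.05))  # variable "training" time
    return candidate_id, gpu_id, random.random()  # placeholder score


def orchestrate(num_gpus: int, num_candidates: int) -> list[tuple[int, int, float]]:
    """Keep every worker busy: whenever one experiment finishes,
    hand its GPU the next candidate instead of waiting for the rest."""
    results = []
    next_candidate = 0
    pending = {}  # future -> GPU it currently occupies
    with cf.ThreadPoolExecutor(max_workers=num_gpus) as pool:
        # Fill every worker once.
        for gpu in range(min(num_gpus, num_candidates)):
            pending[pool.submit(run_experiment, next_candidate, gpu)] = gpu
            next_candidate += 1
        while pending:
            done, _ = cf.wait(pending, return_when=cf.FIRST_COMPLETED)
            for fut in done:
                gpu = pending.pop(fut)
                results.append(fut.result())
                if next_candidate < num_candidates:
                    # Reuse the freed GPU right away.
                    pending[pool.submit(run_experiment, next_candidate, gpu)] = gpu
                    next_candidate += 1
    return results


if __name__ == "__main__":
    finished = orchestrate(num_gpus=8, num_candidates=20)
    print(f"{len(finished)} experiments finished")
```

Because experiments take different amounts of time, a synchronous loop would leave GPUs idle while the slowest run finishes; waiting on `FIRST_COMPLETED` avoids that.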

For the generalization gap, AIRA2 implements a Hidden Consistent Evaluation (HCE) protocol.

The system splits data into three sets:

- Training data the agent can see

- A hidden search set for evaluating candidates

- A validation set used only for final selection
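A minimal sketch of such a three-way split, assuming one fixed random split shared by every candidate (one reading of "consistent"), accuracy as the metric, and toy threshold classifiers. `hce_split`, `HiddenEvaluator`, and the shortlist size `top_k` are hypothetical; the article does not describe AIRA2's actual interfaces. The evaluator keeps the held-out labels to itself, ranks candidates on the search set, and touches the validation set only once, to pick among the top search scorers.

```python
import random
from typing import Callable, Sequence

Predictor = Callable[[float], int]


def hce_split(n_rows: int, seed: int = 0, search_frac: float = 0.2, val_frac: float = 0.2):
    """Shuffle row indices once, with a fixed seed, into three disjoint sets."""
    idx = list(range(n_rows))
    random.Random(seed).shuffle(idx)
    n_val, n_search = int(n_rows * val_frac), int(n_rows * search_frac)
    val = idx[:n_val]
    search = idx[n_val:n_val + n_search]
    train = idx[n_val + n_search:]  # the only rows the agent may look at
    return train, search, val


class HiddenEvaluator:
    """Holds the held-out labels; the agent only ever receives scores."""

    def __init__(self, xs: Sequence[float], ys: Sequence[int], search_idx, val_idx):
        self._xs, self._ys = xs, ys
        self._search, self._val = search_idx, val_idx

    def _accuracy(self, predict: Predictor, idx) -> float:
        return sum(predict(self._xs[i]) == self._ys[i] for i in idx) / len(idx)

    def search_score(self, predict: Predictor) -> float:
        # Same fixed search set for every candidate, so scores are comparable.
        return self._accuracy(predict, self._search)

    def final_pick(self, candidates: list, top_k: int = 3) -> Predictor:
        # Validation data is used once, only to choose among the top search scorers.
        shortlist = sorted(candidates, key=self.search_score, reverse=True)[:top_k]
        return max(shortlist, key=lambda p: self._accuracy(p, self._val))


# Usage: toy data where the true rule is "label 1 if x > 0.5".
rng = random.Random(1)
xs = [rng.random() for _ in range(1000)]
ys = [int(x > 0.5) for x in xs]
train, search, val = hce_split(len(xs))
evaluator = HiddenEvaluator(xs, ys, search, val)
# Candidates the agent might have produced from the `train` rows.
candidates = [lambda x, t=t: int(x > t) for t in (0.3, 0.4, 0.5, 0.6)]
best = evaluator.final_pick(candidates)
```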

To overcome static operator limitations, AIRA2 replaces fixed prompts with ReAct agents that can reason and act autonomously.

These sub-agents can:

- Perform exploratory data analysis

- Run quick experiments

- Inspect error logs

- Iteratively debug issues

Instead of failing when they encounter an unexpected error, they can investigate, form hypotheses, and try multiple fixes within the same session: more like a determined researcher than a script that gives up after one exception.
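The reason-act-observe cycle behind a ReAct sub-agent can be sketched as a loop in which every tool result, including an exception, is fed back to the policy as an observation. In this hypothetical example, `scripted_policy` stands in for the language model and `train` is a toy tool that "diverges" when the learning rate is too high; neither reflects AIRA2's actual prompts or tools.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Step:
    thought: str
    action: str
    arg: Any
    observation: str


def react_loop(policy: Callable, tools: dict, task: str, max_steps: int = 8):
    """Alternate reasoning (policy) and acting (tools); every result,
    including an exception, is fed back as an observation."""
    history: list = []
    for _ in range(max_steps):
        thought, action, arg = policy(task, history)
        if action == "finish":
            history.append(Step(thought, action, arg, "done"))
            return arg, history
        try:
            observation = str(tools[action](arg))
        except Exception as exc:  # an error is information, not a dead end
            observation = f"error: {exc}"
        history.append(Step(thought, action, arg, observation))
    return None, history  # step budget exhausted


def train(lr: float) -> float:
    # Toy tool: "diverges" when the learning rate is too large.
    if lr > 0.1:
        raise ValueError(f"loss diverged at lr={lr}")
    return 1.0 - lr  # placeholder final loss


def scripted_policy(task: str, history: list):
    # Stand-in for an LLM: reads the last observation and picks the next move.
    if not history:
        return "Try a large learning rate first.", "train", 1.0
    last = history[-1]
    if last.observation.startswith("error"):
        return "Training diverged; halve the learning rate.", "train", last.arg / 2
    return "Training converged; report this learning rate.", "finish", last.arg


answer, trace = react_loop(scripted_policy, {"train": train}, task="fit a model")
for step in trace:
    print(f"{step.thought} -> {step.action}({step.arg}) -> {step.observation}")
```

Here the loop hits four divergence errors, halving the learning rate each time, before a run succeeds and the policy finishes; a fixed single-turn "debug" prompt would have stopped at the first exception.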

Proving the approach works

The researchers evaluated AIRA2 on MLE-bench-30, a collection of 30 Kaggle machine learning competitions ranging from computer vision to natural language processing.

AIRA2 kept improving the longer it ran, reaching 76.0% at 72 hours, while previous systems typically degraded with extended runtime, like marathon runners who forgot to train.

The ablation studies revealed crucial insights

Removing the parallel compute capability dropped performance by over 12 percentile points at 72 hours.

Without the hidden evaluation protocol, performance plateaued after 24 hours and showed no improvement with additional compute (a very expensive way to stand still).

The ReAct agents proved especially valuable early in the search, providing a 5.5 percentile point boost at 3 hours by enabling more efficient exploration.

Perhaps most revealing was the finding about overfitting

By implementing consistent evaluation, the researchers discovered that the performance degradation seen in prior work wasn't due to data memorization at all.

Instead, it stemmed from evaluation noise and metric gaming. Once these sources of instability were controlled, agent performance improved monotonically with additional compute (finally behaving the way everyone had hoped it would in the first place).
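Why evaluation noise can distort a search is easy to see in a small simulation. This is a generic illustration of selection bias, not the researchers' analysis: each candidate gets a true score plus Gaussian measurement noise, the candidate with the best observed score is picked, and the hypothetical function `selection_optimism` reports how much that observed score overstates the true one. The score distribution, `noise_sd`, and trial count are arbitrary assumptions.

```python
import random
import statistics


def selection_optimism(n_candidates: int, noise_sd: float, trials: int = 2000, seed: int = 0) -> float:
    """Average (observed - true) score of the candidate chosen by a noisy metric."""
    rng = random.Random(seed)
    gaps = []
    for _ in range(trials):
        true = [rng.gauss(0.5, 0.05) for _ in range(n_candidates)]
        observed = [t + rng.gauss(0.0, noise_sd) for t in true]
        best = max(range(n_candidates), key=observed.__getitem__)  # pick by noisy score
        gaps.append(observed[best] - true[best])
    return statistics.mean(gaps)


for n in (2, 10, 50):
    print(n, round(selection_optimism(n, noise_sd=0.05), 3))
```

With `noise_sd=0.0` the gap is exactly zero; with noise it is positive and grows with the number of candidates compared. A search that keeps selecting on a noisy metric therefore tends to look better than it will perform on held-out data, which is consistent with the article's point that controlling noise, rather than fighting memorization, is what restored steady improvement.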

Real breakthroughs in action

Beyond the numbers, AIRA2 demonstrated moments of genuine scientific reasoning.

In one competition, the agent's initial approach underperformed. Rather than discarding it, the agent inspected the training logs, correctly diagnosed underfitting, scaled up the model, extended training time, and achieved a gold-medal score.

Not bad for something that doesn’t need coffee breaks.
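The diagnosis in this anecdote follows a standard rule of thumb: if a model cannot fit even its training data and validation loss tracks training loss closely, it is underfitting; if validation loss is much worse than training loss, it is overfitting. The helper below encodes that heuristic. `diagnose`, `REMEDIES`, `target_loss`, and the tolerance are hypothetical; the article does not say how AIRA2's agent reasoned beyond the steps described.

```python
REMEDIES = {
    "underfitting": "increase model capacity and/or train longer",
    "overfitting": "add regularization, augmentation, or more data",
    "ok": "keep the current setup",
}


def diagnose(train_loss: float, val_loss: float, target_loss: float, gap_tol: float = 0.1) -> str:
    """Rule-of-thumb fit diagnosis from final losses (lower is better).

    target_loss: training loss a well-fit model should reach (a hypothetical,
    task-specific threshold).
    gap_tol: allowed relative gap between validation and training loss.
    """
    if val_loss - train_loss > gap_tol * train_loss:
        return "overfitting"  # fits training data much better than unseen data
    if train_loss > target_loss:
        return "underfitting"  # cannot even fit the training data well
    return "ok"


verdict = diagnose(train_loss=0.9, val_loss=0.95, target_loss=0.3)
print(verdict, "->", REMEDIES[verdict])
```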

Similar breakthroughs occurred on other challenging tasks. On a text completion challenge, AIRA2 decomposed the problem into two learned subtasks, training separate models for detecting missing word positions and filling gaps.
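The two-stage decomposition can be illustrated with simple bigram counts standing in for the learned models AIRA2 trained. This is a hypothetical toy (`bigram_counts`, `find_gap`, and `fill_gap` are illustrative names): it assumes exactly one word is missing, never at the very start or end of the sentence, and that whitespace tokenization is enough.

```python
from collections import Counter


def bigram_counts(corpus: list) -> Counter:
    counts: Counter = Counter()
    for sentence in corpus:
        tokens = sentence.split()
        counts.update(zip(tokens, tokens[1:]))  # count adjacent word pairs
    return counts


def find_gap(tokens: list, counts: Counter) -> int:
    """Stage 1: the gap most likely sits between the least familiar adjacent pair.
    Returns the insertion index (the missing word goes before tokens[index])."""
    i = min(range(len(tokens) - 1), key=lambda j: counts[(tokens[j], tokens[j + 1])])
    return i + 1


def fill_gap(tokens: list, pos: int, counts: Counter, vocab: set) -> str:
    """Stage 2: choose the word that best bridges its left and right neighbours."""
    left, right = tokens[pos - 1], tokens[pos]
    return max(sorted(vocab), key=lambda w: counts[(left, w)] * counts[(w, right)])


corpus = ["the cat sat on the mat", "the dog sat on the rug", "a cat sat on a chair"]
counts = bigram_counts(corpus)
vocab = {w for s in corpus for w in s.split()}
tokens = "the cat on the mat".split()
pos = find_gap(tokens, counts)
word = fill_gap(tokens, pos, counts, vocab)
print(" ".join(tokens[:pos] + [word] + tokens[pos:]))  # the cat sat on the mat
```

Splitting the task this way lets each stage be trained and evaluated on its own, and an error in locating the gap is easy to tell apart from an error in choosing the word.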

On a fine-grained image classification task with 3,474 classes, it achieved the highest score among all evaluated agents by carefully ensembling multiple vision models trained with asymmetric loss functions: no small feat, even by human standards.
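The article does not say which asymmetric loss AIRA2 used. One well-known formulation is the Asymmetric Loss (ASL) of Ridnik et al. for multi-label classification, which down-weights easy negatives with a focusing exponent and a probability margin. The sketch below implements that per-class form on independent sigmoid probabilities, plus plain probability averaging as the ensembling step; treating each class as an independent output, the function names, and the parameter values are all illustrative assumptions.

```python
import math


def asymmetric_loss(probs: list, targets: list, gamma_pos: float = 0.0,
                    gamma_neg: float = 4.0, margin: float = 0.05, eps: float = 1e-8) -> float:
    """ASL-style loss for one example over independent per-class probabilities."""
    total = 0.0
    for p, y in zip(probs, targets):
        if y == 1:
            # Positives: focal-style weighting, (1 - p)^gamma_pos * log(p).
            total -= (1.0 - p) ** gamma_pos * math.log(max(p, eps))
        else:
            # Negatives: shift the probability down by `margin`, so very easy
            # negatives (p <= margin) contribute exactly zero loss.
            p_m = max(p - margin, 0.0)
            total -= p_m ** gamma_neg * math.log(max(1.0 - p_m, eps))
    return total


def ensemble(prob_lists: list) -> list:
    """Average class probabilities across models (a simple ensembling rule)."""
    return [sum(ps) / len(prob_lists) for ps in zip(*prob_lists)]


# Usage: two hypothetical models scoring three classes for one image.
model_a = [0.70, 0.20, 0.02]
model_b = [0.50, 0.40, 0.04]
combined = ensemble([model_a, model_b])
print(combined, asymmetric_loss(combined, [1, 0, 0]))
```

With thousands of classes, almost every class is a negative for any given image; the asymmetry keeps those many easy negatives from swamping the gradient from the single positive.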

The path forward for AI-driven research

AIRA2 represents more than incremental progress.

By treating AI research as a distributed systems problem rather than just a reasoning challenge, it demonstrates that the key to scaling AI agents lies in addressing fundamental engineering bottlenecks.

The system's ability to maintain consistent improvement over 72 hours of compute suggests we're moving closer to agents that can conduct genuine, sustained scientific investigation, without quietly falling apart halfway through.

The implications extend beyond benchmark performance

As these systems mature, they could accelerate discovery across fields from drug development to materials science.

However, challenges remain.

The researchers acknowledge that distinguishing genuine reasoning from sophisticated pattern matching remains difficult, especially given potential contamination from publicly available solutions in training data.

With careful engineering to address compute efficiency, evaluation reliability, and operator flexibility, we can build systems that don't just automate routine tasks but engage in the messy, iterative process of scientific discovery.

The gap between human and AI researchers continues to narrow, one bottleneck at a time.
